Product Strategy

The Manager Seat

Designing a Work OS where independent experts manage AI-assisted work

Role

Head of Product Design

Duration

Ongoing - early thesis to design partner v1

Team

Founding team (~10): Product, Design, Data, Strategy

Status (Apr 2026): 25 design partners · 18 weekly active · 5 heavy users (~2 hrs/day) · testing ICP assumptions

The starting question

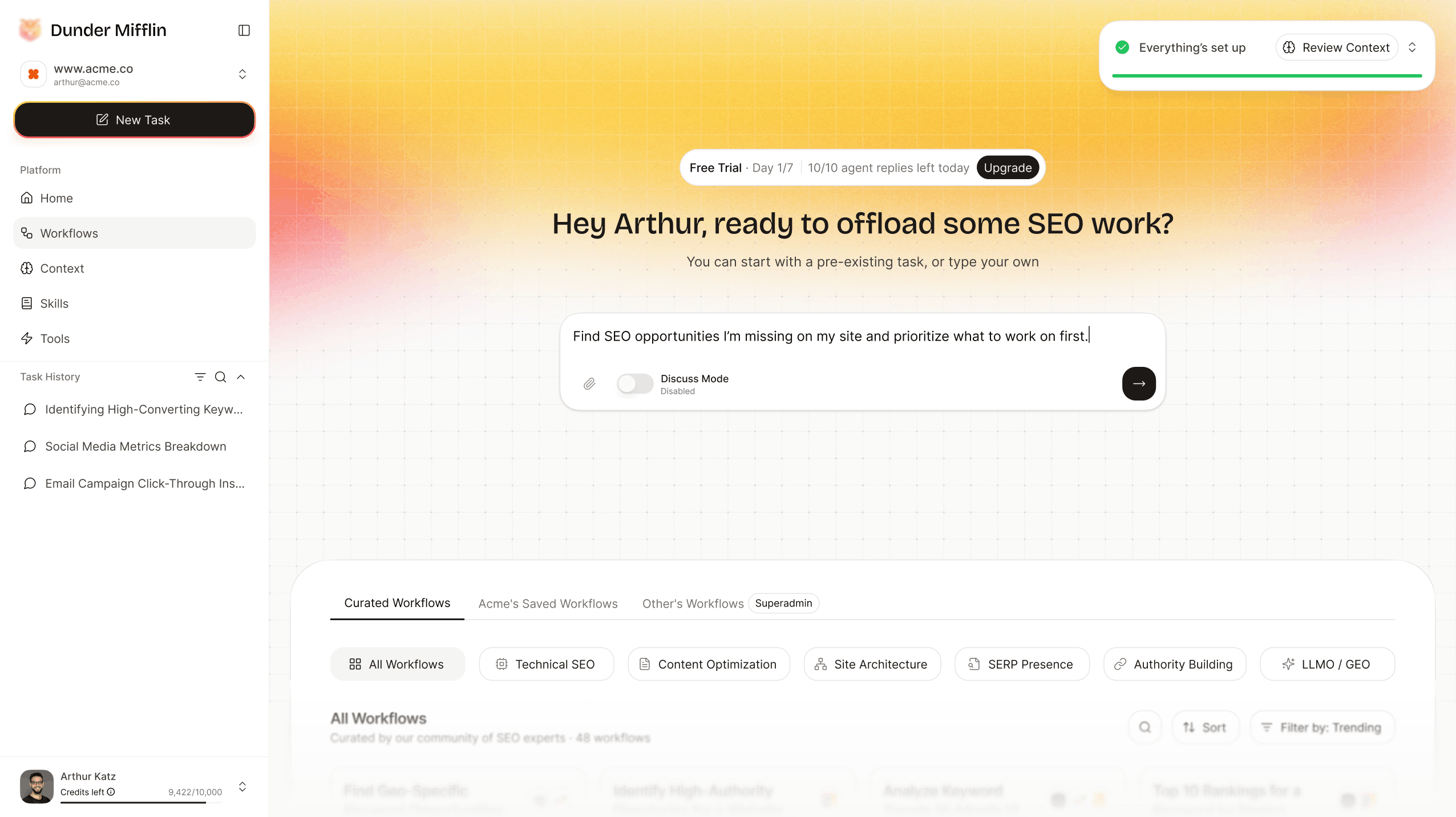

The product began as a chat experience for marketing professionals - a working SEO assistant with a natural-language interface, useful answers, and a growing user base.

But there was something quieter going on in the data. Power users were not coming back for another conversation. They were coming back for the outputs. They didn't want a smarter chatbot; they wanted a smarter morning.

That gap between answering questions and getting work done became the brief.

“When AI tools change weekly, users need the layer above the tools.”

This case study is about the journey from a chat product to a Work OS - the bets we made, the ones that worked, and the ones we revised. The conversation layer itself gets its own deeper treatment in A Chat Bubble Is Not a Workflow.

The first instinct, and why it was wrong

The first instinct was to make the chat smarter. Better prompts, more tools, longer context windows.

A working session with our second design partner reframed it. They had used the assistant to run a content gap analysis, and the output was good. But they had opened it in a browser tab, between their SEO platform and a spreadsheet, and kept forgetting to come back to it. The assistant lived nowhere.

The design tension surfaced in a single sentence:

The chat is the interface. The user has no workspace.

A smarter chat solved the wrong problem. The problem wasn't the quality of the answers - it was where the work lived.

What I owned

I led design end-to-end on the workspace direction - translating broad ambition into a concrete product surface, pattern set, and decision framework.

Concretely:

I did not own backend architecture, model orchestration, or the underlying agent runtime. I worked closely with the founding PM and the engineering lead, and the wedge call was a joint decision with leadership.

The five bets - what worked, what got revised

The product direction came down to five bets. Each one had a first version that was wrong, or a second version that improved on it.

Bet 1 - Pick a real role before we pick a feature set

A generic AI workspace can sound flexible. It also sounds like nothing.

The first internal pitches were horizontal: an AI workspace for “knowledge workers” or “experts.” I argued against it. Trust in AI products doesn't come from model quality alone - it comes from the product feeling like it understands the specific work.

We picked SEO and content as the wedge. Specific terminology, specific data sources, specific success criteria. A user could open the workspace and immediately recognise their day.

What worked: design partner activation jumped meaningfully once the workspace stopped looking like ChatGPT-with-extras and started looking like an SEO tool that thought.

What I got wrong: the wedge was initially scoped too tightly to technical SEO. Our first three partners were all generalist marketers who needed the assistant to flex into content audits, competitor work, and reporting too. We rebuilt the workflow library in week six to span the wider marketing surface.

V1 scope expanded after Design Partner #2 feedback (rebuilt week 6)

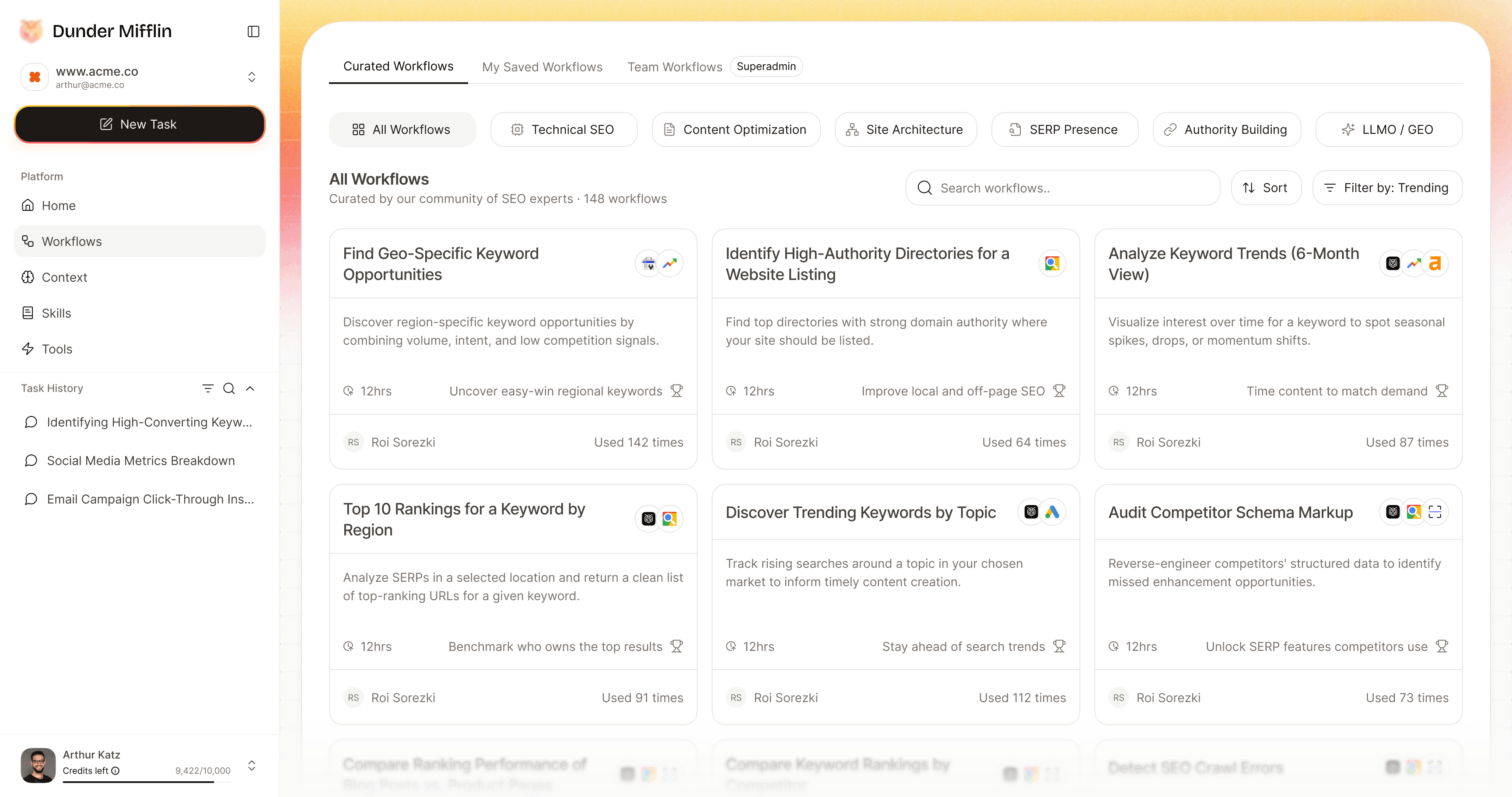

Bet 2 - Workflow discovery is a UX problem, not a content problem

Most automation products assume users already know what to automate. Partner conversations kept producing the opposite signal:

“I know I'm wasting time. I don't know what to automate.”

The first version of our workflow library was a flat searchable list. It tested poorly - partners scrolled, got overwhelmed, and went back to chat. The list felt like an app store, and they weren't shopping.

We rebuilt it as a workflow surface - workflows organised by the moment in the user's week when each one is useful. Monday morning reporting. Pre-meeting research. End-of-quarter audit. Each workflow showed what it produces, what data it needs, how much time it saves, and a sample output before activation.

The question shifted from “figure out what to do” to “choose what to run.”
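For readers who think in data shapes, the difference between the flat list and the workflow surface can be sketched in a few lines of Python. This is an illustration only, not the product's actual data model: `WorkflowCard`, `group_by_moment`, the workflow names, and the minutes-saved figures are all hypothetical placeholders. Only the three moments and the four card fields come from the description above.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class WorkflowCard:
    """Hypothetical shape of one card on the workflow surface."""
    name: str
    moment: str                   # the moment in the week when it is useful
    produces: str                 # what it produces
    data_needed: Tuple[str, ...]  # what data it needs
    minutes_saved: int            # placeholder estimate, not a measured figure
    sample_output: str            # preview shown before activation


def group_by_moment(cards: List[WorkflowCard]) -> Dict[str, List[WorkflowCard]]:
    """Turn a flat list into a surface keyed by moment, in first-seen order."""
    surface: Dict[str, List[WorkflowCard]] = defaultdict(list)
    for card in cards:
        surface[card.moment].append(card)
    return dict(surface)


# Illustrative cards; names and numbers are invented for the sketch.
CARDS = [
    WorkflowCard("Weekly ranking report", "Monday morning reporting",
                 "a ranking summary doc", ("Search Console",), 45,
                 "sample-ranking-report"),
    WorkflowCard("Competitor snapshot", "Pre-meeting research",
                 "a one-page competitor brief", ("competitor URLs",), 30,
                 "sample-competitor-brief"),
    WorkflowCard("Traffic summary", "Monday morning reporting",
                 "a traffic digest", ("Search Console",), 20,
                 "sample-traffic-digest"),
    WorkflowCard("Content audit", "End-of-quarter audit",
                 "an audit spreadsheet", ("site crawl",), 120,
                 "sample-content-audit"),
]

SURFACE = group_by_moment(CARDS)

for moment, cards in SURFACE.items():
    print(moment)
    for card in cards:
        print(f"  {card.name}: produces {card.produces}")
```

The design point is in the key: the user browses by moment, and every card carries its own preview, so choosing a workflow never requires reading the whole catalogue.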

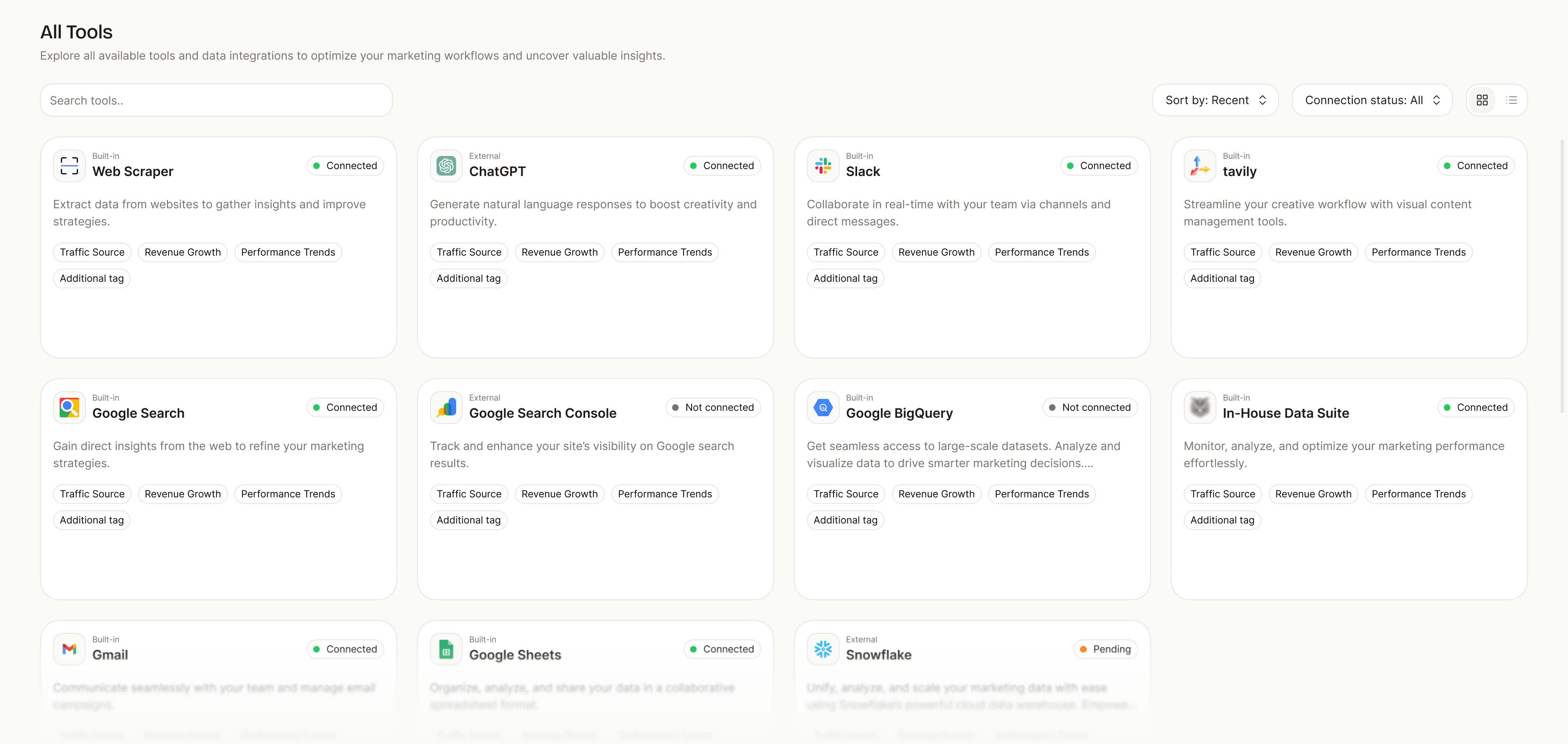

Bet 3 - Hide tool management, not control

The product was never about replacing every tool the user already has. It was about reducing the overhead of managing them.

A workflow might pull from Search Console, run an analysis, write to a doc, and ping Slack. The user shouldn't have to think about each connection. But they need visibility, permission, and the ability to swap one tool for another when something better appears.

The design rule:

“Tool management should disappear. User control should not.”

In practice, this meant:

Bet 4 - The user is in the manager seat, not the doer seat

This was the bet that shaped everything else.

The product is not a chatbot that does things on the user's behalf and asks forgiveness later. It's a workspace where the user briefs an AI teammate, supervises execution, reviews outputs, and intervenes when needed.

That belief affected:

A bad AI product says “trust me.” A better one shows: here's what I understood, here's what I'm going to do, here's where I am, and here's where I need you.

Bet 5 - Reusable work compounds

A single AI output saves time. A saved workflow creates leverage. A scheduled workflow becomes operations. A shareable workflow app becomes productised expertise.

That progression became part of the long-term product vision - particularly powerful for independent experts whose value is currently locked inside private processes (audits, decks, repeatable consulting work).

This is the bet I'm most uncertain about as a near-term reality. We've designed for it but haven't validated it yet - the workflow-app sharing layer is on the Q3 roadmap. I'd rather flag the uncertainty than pretend the bet has been resolved.

Product architecture

The Work OS sits on a small set of connected layers. Naming them clearly was part of the work - until we had shared language, every team conversation re-litigated the boundaries.

What's measurable today

I want to be specific about what's true now versus what we're aiming for.

Note: These are design partner metrics, not production metrics. We are testing ICP assumptions with varied usage patterns. Current status: Apr 2026. The product is in beta.

What changed because of this work

The clearest outcome is shared language. The team used “chat,” “workflow,” “agent,” “task,” and “tool” interchangeably for months. Pinning down the boundaries was design work too:

That sounds like semantics. It changed how we made roadmap decisions. “Should this be a tool or a workflow?” stopped being a stylistic question and started being a real design call.

The product also has a clearer external shape:

What I'd do differently

Two things, honestly.

The failure-state library should have come earlier. We treated failure modes as a v2 problem because the happy path was hard enough. By design partner 3 we were retrofitting the failure UI under pressure, and the experience suffered for two weeks while we caught up. (The failure model is now a real design surface - covered in CS2.)

I'd push harder on role-specific terminology earlier. I assumed our SEO partners would talk about “workflows” because we did. They didn't. They talked about “audits,” “reports,” “checks.” We changed the workflow library copy in week eight and activation improved. I should have run the terminology audit in week one.

Reflection

Not every product opportunity starts as a problem.

Sometimes a new capability appears before users have the language for it. AI is in that phase now - the technology moves first, behaviour follows, and good product design lives in the gap.

The interface for AI work doesn't get to be the chat bubble that started the conversation. It has to be a place that holds the work - context, judgment, review, responsibility, trust - without removing the expert from it.

That's the manager seat. A workspace where users brief AI teammates, supervise execution, reuse what works, and eventually package what's valuable for others.

The conversation layer that lives inside that workspace gets its own case study: A Chat Bubble Is Not a Workflow.